Issue #382 - The ML Engineer 🤖

Come Say Hi @ PyCon DE, KAOS Autonomous Extension, Anthropic Tackling Security Risks, How People Use ChatGPT, Linus Torvalds Agentic Guidelines + more 🚀

Come say hello at PyCon / PyData DE this week!! More information about our talks, and how to find us, is in this post and in the newsletter highlight below!!

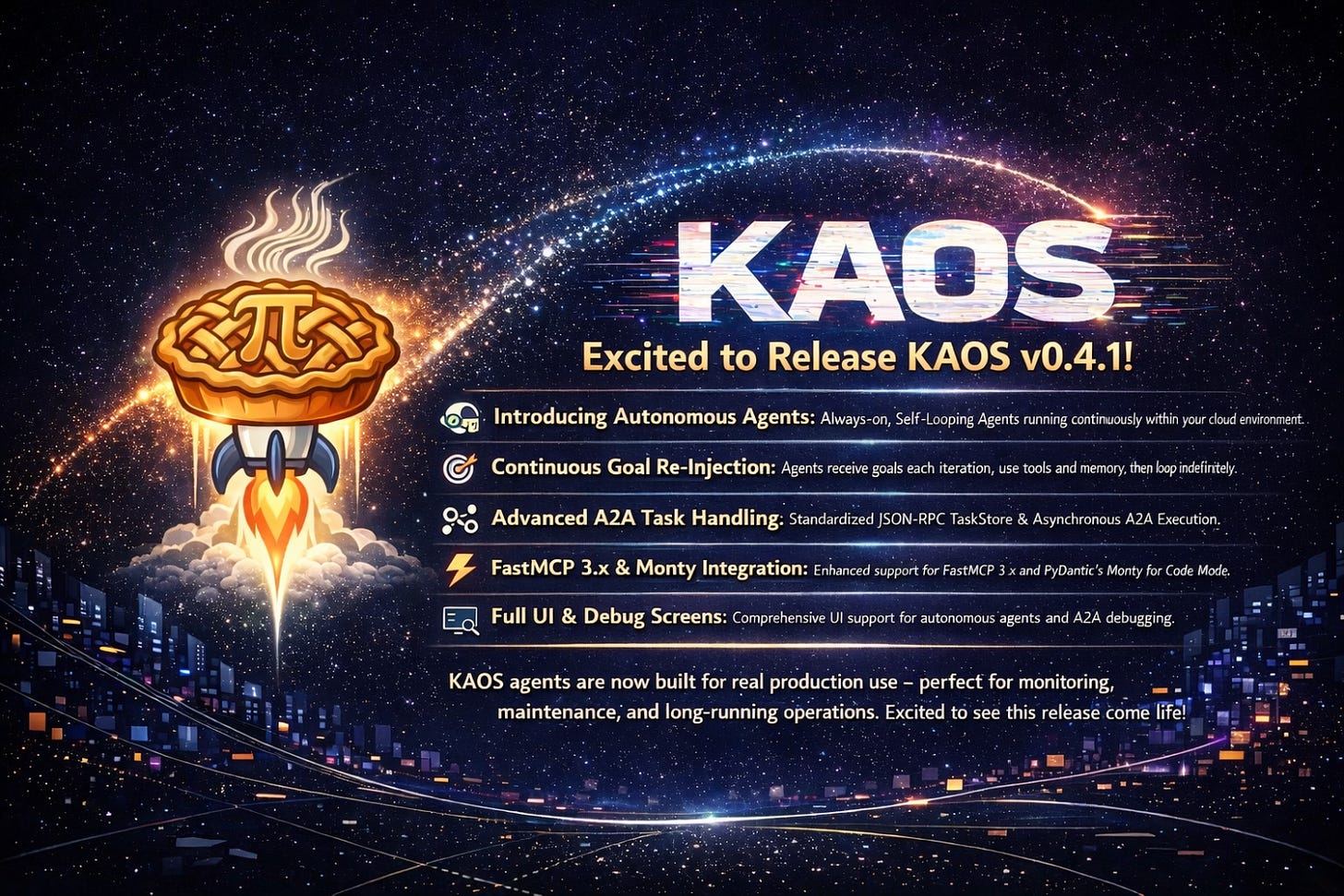

Announcement: Releasing KAOS v0.4.1! Excited for this release which introduces Autonomous Agents 🚀

If you want to support the momentum, do reshare, open an issue, and/or give the repo a star ⭐ https://github.com/axsaucedo/kaos 🔥

Overview below: this is full OpenClaw-style functionality 😎

This week in ML Engineering:

Come Say Hi @ PyCon DE!

KAOS Autonomous Extension

Anthropic Tackling Security Risks

How People Use ChatGPT

Linus Torvalds Agentic Guidelines

Open Source ML Frameworks

Awesome AI Guidelines to check out this week

+ more 🚀

If you are at PyCon DE & PyData this week, come say hello!

Super excited to announce that we’ll have 6 (yes, six!) talks from scientists and engineers (+ alumni) from my group within Zalando’s Markets AI, Data & Platform business unit!! The talks will range across production ML, forecasting, and ML platform engineering, among many other exciting topics! Here’s the full list of talks coming up this week:

> Alejandro Saucedo: Production ML across 2015–2035: A Journey to the Past and the Future

> Stefan Birr & Mones Konstantin Raslan: How to compare apples with oranges: Proper evaluation of article-level demand forecasts

> Irena Bojarovska: Foundation Models in Forecasting: Are We There Yet? Lessons from the Trenches

> Petar Ilijevski: Zero-Copy or Zero-Speed? The hidden overhead of PySpark, Arrow & SynapseML for inference

> Abdullah Taha: Holistic Optimization: Implementing “Pipeline-as-a-Trial” HPO with Ray and Cloud Infra

> Akif Cakir: From Struggling to Mastery: A Practical Guide to Data Pipeline Operations

Make sure you don’t miss any of our talks! Looking forward to the largest Python & AI conference in Europe, so come say hello to any of the Zalando group at the conference!

KAOS Autonomous Extension

Excited to release KAOS v0.4.1! This release introduces autonomous agents, bringing the OpenClaw-like design pattern of continuously running agents into your scalable cloud-native environment! This means we are finally able to move KAOS agents beyond reactive request/response APIs to agents that run continuously as always-on operational infrastructure.

The main addition is continuous self-looping agent execution: an agent starts on pod boot, gets its goal re-injected on every iteration, uses tools and memory to reason about the current state, pauses for a configurable interval, and then keeps going indefinitely until the pod is stopped. In practice, this makes KAOS much more compelling for real production use cases like monitoring, maintenance, and long-running cluster operations, rather than just interactive demos.

On top of that, the new version introduces much more sophisticated A2A task lifecycle support via the standardised JSON-RPC endpoint, along with a TaskStore that is planned to be extended for distributed execution. This provides a much cleaner split between the continuous CRD-driven autonomous mode and the budgeted async A2A task mode. We also included FastMCP 3.x support, integration with Pydantic’s Monty for Code Mode, full UI support for autonomous agents, and an A2A debug screen. This release has been in the making for a while, and I’m really happy that it has finally seen the light of day!
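To make the self-looping execution model more concrete, here is a minimal Python sketch of the loop described above. It is not KAOS code: `run_autonomous`, `AgentMemory`, and the `step` callable (standing in for the LLM call plus tool use) are hypothetical names, and the `max_iterations` cap exists only so the sketch can terminate in a test; in the autonomous mode described above the loop runs until the pod is stopped.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class AgentMemory:
    """Hypothetical in-process memory holding (iteration, observation) notes."""

    notes: List[Tuple[int, str]] = field(default_factory=list)

    def add(self, iteration: int, observation: str) -> None:
        self.notes.append((iteration, observation))

    def recent(self, k: int = 5) -> List[Tuple[int, str]]:
        # Only the last k notes are fed back, keeping each prompt bounded.
        return self.notes[-k:]


def run_autonomous(
    goal: str,
    step: Callable[[str, List[Tuple[int, str]]], str],
    memory: AgentMemory,
    interval_s: float = 30.0,
    max_iterations: Optional[int] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Hypothetical self-looping agent: re-inject goal, act, remember, pause, repeat.

    With max_iterations=None the loop only ends when the process (e.g. the pod)
    is stopped; a finite cap is there purely so the sketch can be tested.
    Returns the number of completed iterations.
    """
    iteration = 0
    while max_iterations is None or iteration < max_iterations:
        # The goal is re-injected into a fresh prompt on every iteration.
        prompt = f"Goal: {goal}\nIteration: {iteration}"
        # `step` stands in for the LLM call plus any tool use.
        observation = step(prompt, memory.recent())
        memory.add(iteration, observation)
        iteration += 1
        sleep(interval_s)  # configurable pause before the next iteration
    return iteration


if __name__ == "__main__":
    mem = AgentMemory()
    done = run_autonomous(
        "keep cluster nodes healthy",
        lambda prompt, recent: f"checked nodes ({len(recent)} prior notes)",
        mem,
        interval_s=0.1,
        max_iterations=3,
    )
    print(done, mem.notes)
```

Passing `sleep` in as a parameter keeps the pause configurable and lets the loop be tested without real waits. Re-injecting the goal as a fresh prompt each iteration, rather than appending to an unbounded chat history, is one way to keep a long-running agent anchored to its objective.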

Anthropic Tackling Security Risks

As I was reading the Claude Code Mythos system card and the Project Glasswing post this weekend, I arrived at a slightly more controversial take: the exponential growth of Mythos is hype, but the cyber impact and risks are real (even without Mythos). Irrespective of newer and more powerful model releases, it is clear that cyber specialists can now unlock expert-level knowledge of any software they target, and we are only now seeing the industry catch up with this disruption. The point I find most critical is the impact this is already having, and will continue to have, on key infrastructure such as banking. More broadly, this feels like another signal that these models are expanding beyond pure software engineering into cyber, data, science, and MLOps; I have already seen this in my group, where auto-research use cases have started to unlock considerable value. The last point I keep coming back to is measurement: we may need to move from classic DORA metrics toward a more “Semantic DORA” world, where we measure not just delivery output but the actual quality and semantic value of progress across bugs, incidents, customer pain, and shipped changes.

How People Use ChatGPT

This is a super interesting paper that gives one of the clearest large-scale views yet into how ChatGPT is actually used in the wild, based on privacy-preserving classification of millions of consumer conversations. The headline for production ML practitioners is that the main value lies not in autonomous task execution but in decision support: most usage clusters around practical guidance, information seeking, and writing, with work-related usage concentrated in knowledge-intensive roles, especially in activities like documenting and interpreting information, solving problems, and giving advice. Work use is growing, but non-work use is growing even faster, which suggests the impact surface for LLM products is much broader than enterprise productivity alone. The paper also shows that coding is a much smaller share of overall usage than industry discourse often implies, while writing-heavy workflows remain the strongest work use case. Overall, the results suggest that the biggest near-term opportunity for production systems is not just building agents that “do work”, but building reliable copilots that support decisions, shape drafts, and fit into everyday information workflows across roles and geographies.

Linus Torvalds Agentic Guidelines

The Linux kernel has now released guidelines for vibe-coded contributions! Unsurprisingly, it’s one of the most reasonable and down-to-earth takes I have seen so far: AI-generated kernel code must still follow the standard Linux development, style, submission, and GPL-2.0-only licensing rules. AI systems must not add Signed-off-by tags, because only a human can certify the Developer Certificate of Origin; the human submitter remains fully responsible for reviewing the code, ensuring legal compliance, and accepting accountability for the patch. The main operational addition is traceability through an Assisted-by tag that records the agent, model version, and any specialized analysis tools used, making AI involvement auditable without shifting responsibility away from human maintainers. I am quite keen to see how other projects standardise and embed similar guidelines amid the huge rise in AI slop contributions.
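As a rough illustration of how a project might check these rules automatically, the Python sketch below parses commit-message trailers, requires a `Signed-off-by` line, and splits each `Assisted-by` value into agent, model version, and tools. It assumes the trailer takes the form `Assisted-by: AGENT_NAME:MODEL_VERSION [TOOL1] [TOOL2]` with space-separated tool names; the kernel’s own documentation is the authority on the exact syntax. The function and class names are hypothetical, and the check can only confirm that a `Signed-off-by` line is present, not that a human wrote it.

```python
import re
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Assistance:
    agent: str
    model_version: str
    tools: List[str]


# "Key: value" lines such as "Signed-off-by: Jane Doe <jane@example.org>".
TRAILER_RE = re.compile(r"^(?P<key>[A-Za-z-]+):\s*(?P<value>.+)$")


def parse_trailers(message: str) -> List[Tuple[str, str]]:
    """Return (key, value) pairs found in the last paragraph of a commit message."""
    last_paragraph = message.strip().split("\n\n")[-1]
    trailers = []
    for line in last_paragraph.splitlines():
        match = TRAILER_RE.match(line.strip())
        if match:
            trailers.append((match.group("key"), match.group("value").strip()))
    return trailers


def check_ai_policy(message: str) -> List[Assistance]:
    """Hypothetical CI check: require Signed-off-by and parse Assisted-by trailers."""
    trailers = parse_trailers(message)
    # Only a human may certify the DCO; this checks presence, not authorship.
    if not any(key == "Signed-off-by" for key, _ in trailers):
        raise ValueError("missing Signed-off-by from the human submitter")
    assisted = []
    for key, value in trailers:
        if key != "Assisted-by":
            continue
        # Assumed format: AGENT_NAME:MODEL_VERSION followed by optional tool names.
        head, *tools = value.split()
        agent, sep, model_version = head.partition(":")
        if not sep or not agent or not model_version:
            raise ValueError(f"malformed Assisted-by trailer: {value!r}")
        assisted.append(Assistance(agent, model_version, tools))
    return assisted


if __name__ == "__main__":
    msg = (
        "mm: fix off-by-one in example_fn\n\n"
        "Longer description of the change.\n\n"
        "Assisted-by: ExampleAgent:example-model-1 coccinelle sparse\n"
        "Signed-off-by: Jane Doe <jane@example.org>"
    )
    print(check_ai_policy(msg))
```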

About us

The Institute for Ethical AI & Machine Learning is a European research centre that carries out world-class research into responsible machine learning.